
Where they burn books, they will also ultimately burn people.

—Heinrich Heine

You Morons

In early March 2021, a group of “tech and art enthusiasts” who make up the company Injective Protocol[1] burnt Banksy’s work Morons (White) (2006), which they had previously acquired from Taglialatella Galleries for $95,000.[2] At first sight, the burning could be read as performance art in the spirit of Banksy’s Morons (White), which shows an art auction where a canvas featuring the text “I CAN’T BELIEVE YOU MORONS ACTUALLY BUY THIS SHIT” is up for sale (and going for $750,450). As such, the performance would take Banksy’s own criticism of the art market further, a market whose dialectic has easily reappropriated Banksy’s criticism as part of its norm and turned it into economic value. The burning of the Banksy would then seek to more radically negate the value of the work of art that Banksy’s Morons (White) challenges but cannot quite escape as long as it remains a valuable work of art.

However, such negation was not the goal of the burning. As the tech and art enthusiast who set the Banksy aflame explained, the burning was in fact accomplished as part of a financial investment, and to inspire other artists. In other words, the burning in fact confirmed the art market’s norm rather than challenging it, and it encouraged other artists to make work that does the same. You see, before Banksy’s Morons (White) was burnt, Injective Protocol had recorded the work as what is called a non-fungible token or NFT in the blockchain. This means that for the work’s digital image, a unique, original code was created; that code (which is what you buy if you buy an NFT) is the new, original NFT artwork, henceforth owned by Injective Protocol even if digital copies of Banksy’s Morons (White) of course still circulate as mere symbols of that code.[3] Such ownership, and the financial investment it was intended to be, required the burning of the material Banksy because Injective Protocol sought to relocate the primary value of the work into the NFT artwork—something that could only be accomplished if the original Banksy was destroyed. The goal of the burning was thus to relocate the value of the original in the derivative, which had a bigger financial potential than the original Banksy.

The Banksy burning was perhaps an unsurprising development for those who have an interest in art and cryptocurrencies and have been following the rise of cryptoart. Cryptoart is digital art that is recorded in the blockchain as an NFT. That makes cryptoart “like” bitcoin, which is similarly recorded in the blockchain: each bitcoin is tied to a unique, original code that is recorded in a digital ledger where all the transactions of bitcoin are tracked. As an NFT, a digital artwork is similarly tied to a unique, original code that marks its provenance. The main difference between bitcoin and an NFT is that the former, as currency, is fungible, whereas the latter, as art, is not.[4] Now, NFTs were initially created “next to” already existing non-digital art, as a way to establish provenance for digital images and artworks. But as such images and artworks began to accrue value, and began to comparatively accrue more value than already existing non-digital art, the balance in the art market shifted, and NFTs came to be considered more valuable investments than already existing works of non-digital art.

The burning of Banksy’s Morons (White) was the obvious next step in that development: let us replace the already existing work of non-digital art by an NFT, destroy the already existing work of non-digital art, and relocate the value of the work into the NFT as part of a financial investment. It realizes the dialectic of an art market that will not hesitate to destroy an already existing non-digital work of art (and replace it with an NFT) if it will drive up financial value. The auction houses that have sold NFTs are complicit in this process.

Crypto Value = Exhibition Value + Cult Value

The digital may at some point have held the promise of a moving away from exceptionalism (the belief that the artist and the work of art are exceptional, which is tied to theories of the artist as genius and the unresolved role of the fake and the forgery in art history) as the structuring logic of our understanding of the artist and the work of art. The staged burning of the Banksy does not so much realize that promise as relocate the continued dominance of exceptionalism—and its ties to capitalism, even if the work of art is of course an exceptional commodity that does not truly fit the capitalist framework—in the digital realm. The promise of what artist and philosopher Hito Steyerl theorized as “the poor image”[5] is countered in the NFT as a decidedly “rich image”, or rather, as the rich NFT artwork (because we need to distinguish between the NFT artwork/the code and the digital image, a mere symbol that is tied to the code). Art, which in the part of its history that started with conceptual art in the early 1970s had started realizing itself, parallel to the rise of finance and neoliberalism, as a financial instrument, with material artworks functioning as means to hedge against market crashes (as James Franco’s character in Isaac Julien’s Playtime [2014] discusses[6]), has finally left the burden of its materiality behind to become a straight-up financial instrument, a derivative that has some similarities to a cryptocurrency like bitcoin. Art has finally realized itself as what it is: non-fungible value, one of finance’s fictions.[7]

Although the video of the Banksy burning might shock, and make one imagine (because of its solicitation to other tech enthusiasts and artists) an imminent future in which all artworks will be burnt so as to relocate their primary value in an NFT tied to the artwork’s digital image, such a future actually does not introduce all that much difference with respect to today. Indeed, we are merely talking about a relocation of value, about a relocation of the art market. The market’s structure, value’s structure, remain the same. In fact, the NFT craze demonstrates how the artwork’s structuring logic, what I have called aesthetic exceptionalism,[8] realizes itself in the realm of the digital where, for a brief moment, one may have thought it could have died. Indeed, media art and digital art more specifically seemed to hold the promise of an art that would be more widely circulated, where the categories of authorship, value, and ownership were less intimately connected, and could perhaps even—see Steyerl; but the argument goes back to Walter Benjamin’s still influential essay on the copy[9]—enable a communist politics. Such a communist politics would celebrate the copy against the potentially fascist values of authenticity, creativity, originality, and eternal value that Benjamin brings up at the beginning of his essay. But no: with the NFT, those potentially fascist values are in fact realizing themselves once again in the digital realm, and in a development that Benjamin could not have foreseen “the aura” becomes associated with the NFT artwork—not even the digital image of an artwork but a code as which the image lies recorded in the blockchain. Because the NFT artwork is a non-fungible token, one could argue that it is even more of an original than the digital currencies with which it is associated. After all, bitcoin is still a medium of exchange, whereas an NFT is not. In the same way that art is not money, an NFT is not bitcoin, even if the NFT needs to be understood (as I suggested previously) as one of finance’s fictions.

What’s remarkable here is not so much that a Banksy is burnt, or that other artworks may in the future be burnt. What’s remarkable is the power of aesthetic exceptionalism: an exceptionalism so strong that it can even sacrifice the material artwork to assert itself.

Of course, some might point out (taking Banksy’s Morons (White) as a point of departure) that Banksy himself invited this destruction. Indeed, at a Sotheby’s auction not so long ago, Banksy had himself already realized the partial destruction of one of his works in an attempt to criticize the art market[10]—a criticism that is evident also in the work of art that Injective Protocol burnt. But the art market takes such avant-garde acts of vandalism in stride, and Banksy’s stunt came to function as evidence for what has been called “the Banksy effect”[11]: your attempt to criticize the art market becomes the next big thing on the art market, and your act of art vandalism in fact pushes the dollar value of the work of art. If that happens, the writer Ben Lerner argues in an essay about art vandalism titled “Damage Control”,[12] your vandalism isn’t really vandalism: art vandalism that pushes up dollar value isn’t vandalism. Banksy’s stunt was an attempt to make art outside of the art market, but the attempt failed. The sale of the work went through, and a few months later, one could find the partially destroyed artwork on the walls of a museum, reportedly worth three times more than when it was sold. For Lerner, examples like this open up the question of a work of art outside of capitalism, a work of art from which “the market’s soul has fled”,[13] as he puts it. But as the Banksy example shows, that soul is perhaps less quick to get out than we might think. Over and over again, we see it reassert itself through those very attempts that seek to push it out. One might refer to that as a dialectic—the dialectic of avant-garde attempts to be done with exceptionalist art. Ultimately they realize only one thing: the further institutionalization of exceptionalist art.

That dialectic has today reached a most peculiar point: the end of art that some, a long time ago, already announced. But none of those arguments reached quite as far as the video of the Authentic Banksy Art Burning Ceremony that was released in March: in it, we are quite literally witnessing the end of the work of art as we know it. It shows us the “slow burn”, as the officiating member of Injective Protocol puts it, through which Banksy’s material work of art—and by extension the material work of art at large—disappears (and has been disappearing). At the same time, this destruction is presented as an act of creation—not so much of a digital image of the Banksy work but of the NFT artwork or the code that authenticates that digital image, authors it, brands it with the code of its owners. So with the destruction of Banksy’s work of art, another work of art is created—the NFT artwork, a work that you cannot feature on your wall (even if its symbolic appendage, the digital image of the Banksy, can be featured on your phone, tablet, or computer and even if some owners of the NFT artwork might decide to materially realize the NFT artwork as a work that can be shown on their walls). But what is the NFT artwork? It strikes one as the artwork narrowed down to its exceptionalist, economic core, the authorship and originality that determine its place on the art market. It is the artwork limited to its economic value, the scarcity and non-fungibility that remain at the core of what we think of as art. This is not so much purposiveness without purpose, as Immanuel Kant famously had it, but non-fungible value as a rewriting of that phrase. Might that have been the occluded truth of Kant’s phrase all along?

In Kant After Duchamp,[14] which remains one of the most remarkable books of 20th-century art criticism, Thierry de Duve shifted the aesthetic question from “is it beautiful?” (Kant’s question) to “is it art?” (Duchamp’s question, which triggers de Duve’s rereading of Kant’s Critique of Judgment). It seems that today, one might have to shift the question once again, to situate Kant after Mike Winkelmann, the graphic designer/ NFT artist known as Beeple whose NFT collage “Everydays: The First 5000 Days” was sold at a Christie’s auction for $69,346,250. The question with this work is not so much whether it is beautiful, or even whether it is art; what matters here is solely its non-fungible value (how valuable is it, or how valuable might it become?), which would trigger yet another rereading of Kant’s third critique. Shortly after the historic sale of Beeple’s work was concluded, it was widely reported that the cryptocurrency trader who bought the work may have profited financially from the sale, in that the trader had previously been buying many of the individual NFTs that made up Beeple’s collage—individual NFTs that, after the historic sale of the collage, went up significantly in value, thus balancing out the expense of buying the collage and even yielding the trader a profit. What’s interesting here is not the art—Beeple’s work is not good art[15]—but solely the non-fungible value.

It seems clear that what has thus opened up is another regime of art. In his essay on the copy, Benjamin wrote of the shift from cult value, associated with the fascism of the original, to exhibition value, associated with the communism of the copy. Today, we are witnessing the anachronistic, zombie-like return of cult value within exhibition value, a regime that can be understood as the crypto value of the work of art. That seems evident in the physical token that buyers of Beeple’s NFTs get sent: in its gross materialism (it comes with a cloth to clean the token but that can also be used “to clean yourself up after blasting a hot load in yer pants from how dope this is!!!!!!111”; a certificate of authenticity stating “THIS MOTHERFUCKING REAL ASS SHIT (this is real life mf)”; and a hair sample, “I promise it’s not pubes”), it functions as a faux cultic object that is meant to mask the emptiness of the NFT. Assuaging the anxieties, perhaps, of the investors placing their money into nothing, it also provides interesting insights into the materialisms (masculinist/sexist, and racist—might we call them alt-right materialisms?) that reassert themselves in the realm of the digital, as part of an attempt to realize exceptionalism in a commons that could have freed itself from it.[16] As the text printed on the physical token has it: “strap on an adult diaper because yer about to be in friggn’ boner world usa motherfucker”.

NFT-Elitism

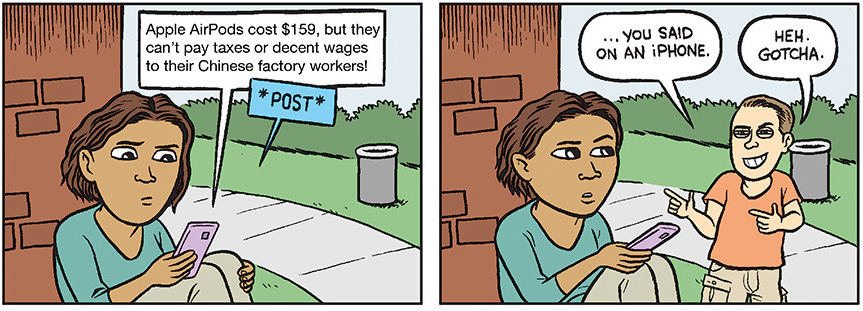

It’s worth asking about the politics of this. I have been clear about the politics of aesthetic exceptionalism: it is associated with the politics of sovereignty, which is a rule of the one, a mon-archy, that potentially tends toward the abusive, tyrannical, totalitarian. That is the case for example with exceptionalism in Carl Schmitt, even if it does not have to be the case (see for example discussions of democratic exceptionalism).[17] With the NFT artwork, the politics of aesthetic exceptionalism is realizing itself in the digital realm, which until now seemed to present a potential threat to it. It has nothing to do with anti-elitism, or populism; it is not about leaving behind art-world snobbery, as some have suggested. It is in fact the very logic of snobbery and elitism that is realizing itself in the NFT artwork, in the code that marks originality, authenticity, authorship and ownership. Cleverly, snobbery and elitism work their way back in via a path that seems to lead elsewhere. It is the Banksy effect, in politics. The burning of the Banksy is an iconoclastic gesture that preserves the political theology of art that it seems to attack.[18] This is very clear in even the most basic discourse on NFTs, which will praise the NFT’s “democratic” potential—look at how it goes against the elitism of the art world!—while asserting that the entire point of the NFT is that it enables the authentication that once again excludes fakes and forgeries from the art world. Many, if not all, of the problems with art world elitism continue here.

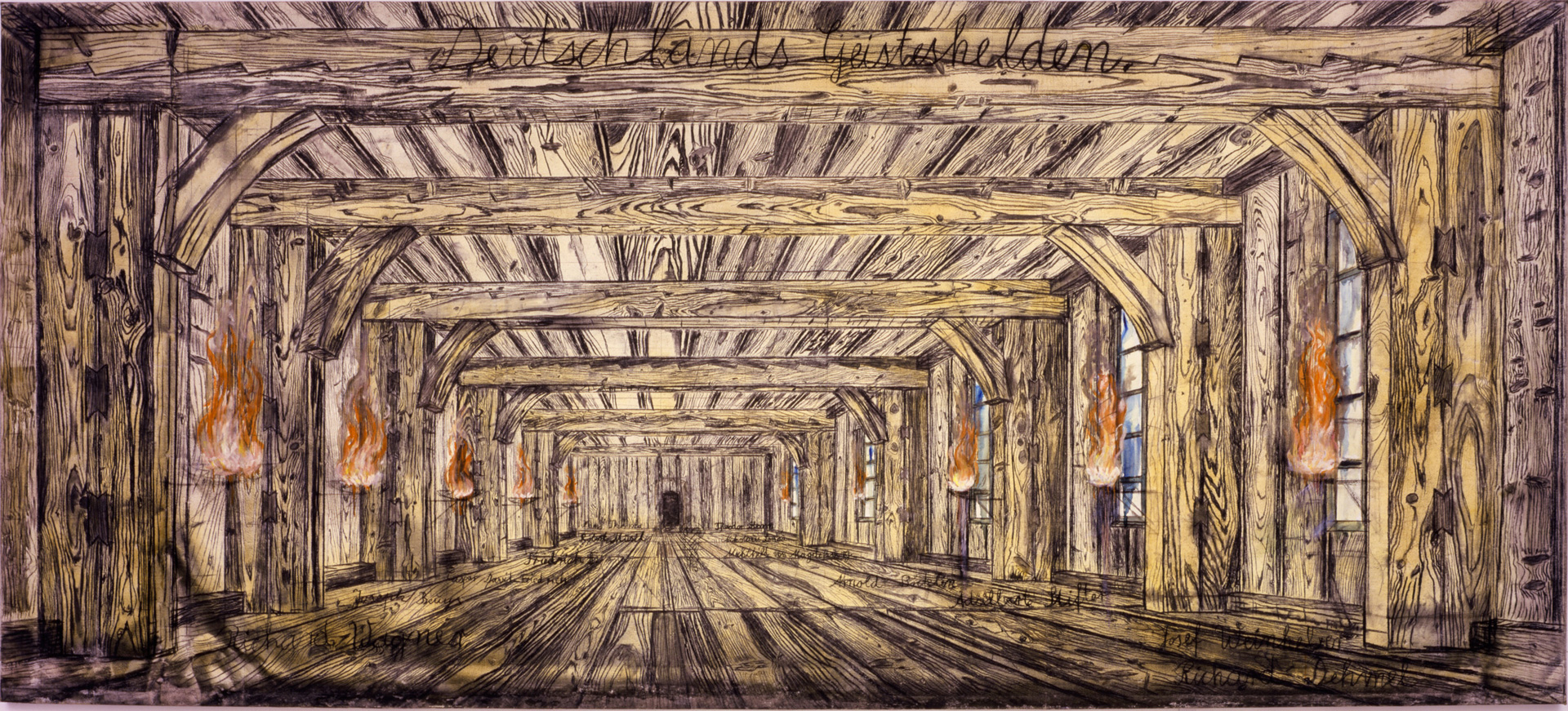

With the description of NFT artworks as derivatives, and their understanding as thoroughly part of the contemporary financial economy, the temptation is of course to understand them as “neoliberal”—and certainly the Banksy burning by a group of “tech and art enthusiasts” (a neoliberal combo if there ever was one) seems to support such a reading. But the peculiar talk about authenticity and originality in the video of the Banksy burning, the surprising mention of “primary value” and its association with the original work of art (which now becomes the NFT artwork, as the video explains), in fact strikes one as strangely antiquated. Indeed, almost everything in the video strikes one as from a different, bygone time: the work, on its easel; the masked speaker, a robber known to me from the tales of my father’s childhood; the flame, slowly working its way around the canvas, which appears to be set up in front of a snowy landscape that one may have seen in a Brueghel. Everything is there to remind us that, through the neoliberal smokescreen, we are in fact seeing an older power at work—that of the “sovereign”, authentic original, the exceptional reality of “primary value” realizing itself through this burning ritual that marks not so much its destruction but its phoenix-like reappearance in the digital realm. In that sense, the burning has something chilling to it, as if it is an ancient ritual marking the migration of sovereign power from the material work of art to the NFT artwork. A transference of the sovereign spirit, if you will, and the economic soul of the work of art. For anyone who has closely observed neoliberalism, this continued presence of sovereignty in the neoliberal era will not come as a surprise—historians, political theorists, anthropologists, philosophers, and literary critics have shown that it would be a mistake to oppose neoliberalism and sovereignty historically, and in the analysis of our contemporary moment. The aesthetic regime of crypto value would rather be a contemporary manifestation of neoliberal sovereignty or of authoritarian neoliberalism (the presence of Trump in Beeple’s work is worth noting).

Art historians and artists, however, may be taken aback by how starkly the political truth of art is laid bare here. Reduced to non-fungible value, brought back to its exceptionalist economic core, the political core of the artwork as sovereign stands out in its tension with art’s frequent association with democratic values like openness, equality, and pluralism. As the NFT indicates, democratic values have little to do with it: what matters, at the expense of the material work of art, is the originality and authenticity that enable the artwork to operate as non-fungible value. Part of finance’s fictions, the artwork thus also reveals itself as politically troubling because it is profoundly rooted in a logic of the one that, while we are skeptical of it in politics, we continue to celebrate aesthetically. How to block this dialectic, and be done with it? How to think art outside of economic value, and the politics of exceptionalism? How to end not so much art but exceptionalism as art’s structuring logic? How to free art from fascism? The NFT craze, while it doesn’t answer those questions, has the dubious benefit of identifying all of those problems.

_____

Arne De Boever teaches in the School of Critical Studies at the California Institute of the Arts and is the author of Finance Fictions: Realism and Psychosis in a Time of Economic Crisis (Fordham University Press, 2017), Against Aesthetic Exceptionalism (University of Minnesota Press, 2019), and other works. His most recent book is François Jullien’s Unexceptional Thought (Rowman & Littlefield, 2020).

_____

Acknowledgments

Thanks to Alex Robbins, Jared Varava, Makena Janssen, Kulov, and David Golumbia.

_____

[1] See: https://injectiveprotocol.com/.

[2] See: https://news.artnet.com/art-world/financial-traders-burned-banksy-nft-1948855. A video of the burning can be accessed here: https://www.youtube.com/watch?v=C4wm-p_VFh0.

[3] See: https://hyperallergic.com/624053/nft-art-goes-viral-and-heads-to-auction-but-what-is-it/.

[4] A simple explanation of cryptoart’s relation to cryptocurrency can be found here: https://www.youtube.com/watch?v=QlgE_mmbRDk.

[5] Steyerl, Hito. “In Defense of the Poor Image”. e-flux 10 (2009). Available at: https://www.e-flux.com/journal/10/61362/in-defense-of-the-poor-image/.

[6] See: https://www.isaacjulien.com/projects/playtime/.

[7] I am echoing here the title of my book Finance Fictions, where I began to theorize some of what is realized by the NFT artwork: Boever, Arne De. Finance Fictions: Realism and Psychosis in a Time of Economic Crisis. New York: Fordham University Press, 2017.

[8] See: Boever, Arne De. Against Aesthetic Exceptionalism. Minneapolis: University of Minnesota Press, 2019.

[9] See: Benjamin, Walter. “The Work of Art in the Age of Mechanical Reproduction”. In: Benjamin, Walter. Illuminations: Essays and Reflections. Ed. Hannah Arendt. Trans. Harry Zohn. New York: Schocken Books, 1969. 217-251.

[10] See: https://www.youtube.com/watch?v=vxkwRNIZgdY&feature=emb_title.

[11] Brenner, Lexa. “The Banksy Effect: Revolutionizing Humanitarian Protest Art”. Harvard International Review XL: 2 (2019): 35-37.

[12] Lerner, Ben. “Damage Control: The Modern Art World’s Tyranny of Price”. Harper’s Magazine 12/2013: 42-49.

[13] Lerner, “Damage Control”, 49.

[14] Duve, Thierry de. Kant After Duchamp. Cambridge: MIT, 1998.

[15] While such judgments are of course always subjective, this article considers a number of good reasons for judging the work as bad art: https://news.artnet.com/opinion/beeple-everydays-review-1951656#.YFKo4eIE7p4.twitter.

[16] The emphasis on materialism here is not meant to obscure the materialism of the digital NFT, namely its ecological footprint which is, like that of bitcoin, devastating.

[17] See Boever, Against Aesthetic Exceptionalism.

[18] On this, see my: “Iconic Intelligence (Or: In Praise of the Sublamental)”. boundary 2 (forthcoming).